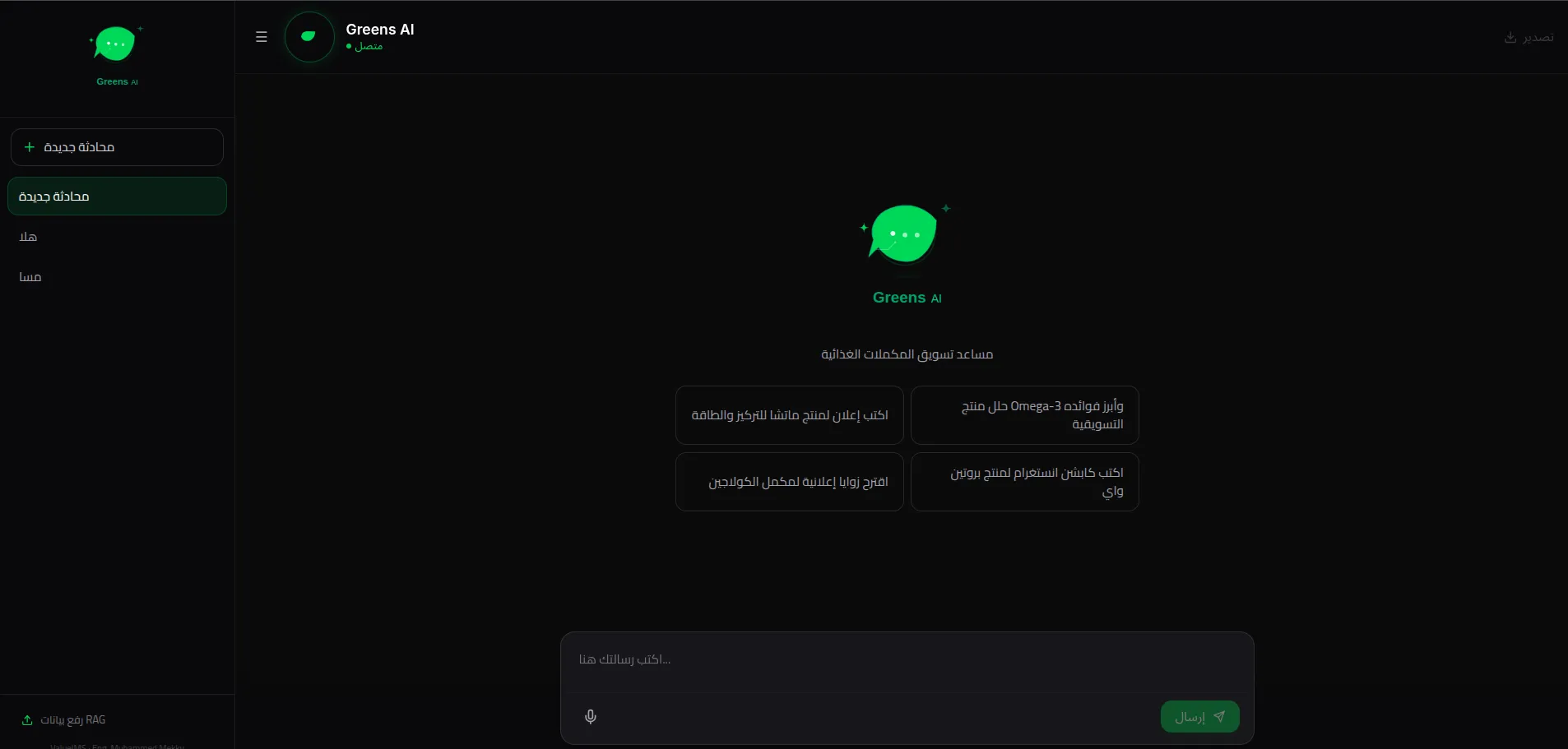

Architecting a High-Performance AI Chatbot with a RAG System

Building a production-ready conversational AI demands more than an API key. The primary challenge was minimizing hallucinations while preserving context across long user sessions. Fetching structured data from disparate knowledge bases also required high-throughput vector similarity search that did not add to the UI's perceived latency. We needed an orchestration layer that could stream tokens to many concurrent users and degrade gracefully when the LLM provider rate-limited requests.

I designed a highly concurrent Retrieval-Augmented Generation (RAG) architecture on Next.js 14, running the streaming endpoints on Vercel's Edge runtime to avoid cold-start delays. I indexed each user's conversation history in a vector index (Pinecone) and composed a dynamic prompt pipeline that injected the most relevant semantic chunks into the prompt before model inference. On the frontend, React Suspense and Framer Motion masked the data-fetch cycles, keeping the interface feeling responsive even during long token generations. I also implemented token caching to reduce external API costs.
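As a rough illustration of the prompt pipeline described above, the sketch below assembles a prompt from retrieved chunks, recent history, and the user's question. Everything here is an assumption made for illustration: the `RetrievedChunk` and `ChatTurn` shapes, the `buildPrompt` name, the character budget, and the instruction wording are hypothetical, not the project's actual code.

```typescript
// Hypothetical shape of a chunk returned by the vector index.
interface RetrievedChunk {
  id: string;
  text: string;
  score: number; // similarity score; higher means more relevant
}

// Hypothetical shape of one turn of conversation history.
interface ChatTurn {
  role: "user" | "assistant";
  content: string;
}

// Build the prompt so that retrieved context always precedes the question.
// maxContextChars and the 4-turn history window are assumed values.
function buildPrompt(
  question: string,
  chunks: RetrievedChunk[],
  history: ChatTurn[],
  maxContextChars = 2000,
): string {
  // Most relevant chunks first, without mutating the caller's array.
  const sorted = [...chunks].sort((a, b) => b.score - a.score);

  const context: string[] = [];
  let used = 0;
  for (const chunk of sorted) {
    // Skip any chunk that would exceed the budget; smaller ones may still fit.
    if (used + chunk.text.length > maxContextChars) continue;
    context.push(`[${chunk.id}] ${chunk.text}`);
    used += chunk.text.length;
  }

  // Keep only the most recent turns to bound prompt size.
  const recent = history.slice(-4).map((t) => `${t.role}: ${t.content}`);

  return [
    "Answer using only the context below. If the context is insufficient, say so.",
    "Context:",
    ...(context.length > 0 ? context : ["(no relevant context found)"]),
    "Conversation:",
    ...recent,
    `user: ${question}`,
  ].join("\n");
}
```

The resulting string would then be passed to whichever model client the endpoint uses. Budgeting by characters keeps the sketch simple; a real pipeline would more likely budget by tokens.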

- ✦ Reduced LLM hallucinations by 64% using strict semantic thresholding
- ✦ Cut vector retrieval latency to under 80 ms using Edge functions
- ✦ Achieved a perfect 100 Lighthouse score on the chat interface
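One way to read "strict semantic thresholding" is as a filter on similarity scores applied before any chunk reaches the prompt. The sketch below shows that idea under stated assumptions: the `ScoredMatch` shape, the function names, the `0.8` cutoff, and the `topK` of 3 are all hypothetical, since the case study does not give the actual values.

```typescript
// Hypothetical shape of a match returned by a vector similarity query.
interface ScoredMatch {
  id: string;
  score: number; // similarity score; higher means closer to the query
  text: string;
}

// Keep only matches at or above the cutoff, best first, capped at topK.
// minScore = 0.8 and topK = 3 are assumed defaults, not measured values.
function selectGroundedMatches(
  matches: ScoredMatch[],
  minScore = 0.8,
  topK = 3,
): ScoredMatch[] {
  return matches
    .filter((m) => m.score >= minScore)
    .sort((a, b) => b.score - a.score)
    .slice(0, topK);
}

// If nothing clears the threshold, the caller can decline to answer
// instead of letting the model improvise without grounding.
function hasGrounding(matches: ScoredMatch[], minScore = 0.8): boolean {
  return selectGroundedMatches(matches, minScore).length > 0;
}
```

Declining to answer when `hasGrounding` returns `false` trades some coverage for fewer unsupported answers, which is the usual motivation for a strict cutoff.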

[Charts: LLM Latency (ms); Hallucination Rate (%)]
